"Why Iteration is not Innovation"

Artificial intelligence hasn’t reached the singularity just yet. The singularity is, essentially, the point at which an artificial intelligence becomes a more adept problem solver than humans: able to think independently and creatively, and to improve itself iteratively in the process. Digital Trends summarized it nicely: “Super-intelligent machines will design even better machines, or simply rewrite themselves to become smarter. The results are a recursive self-improvement which is either very good, or very bad for humanity as a whole.”

So where do we fall on the spectrum from zero to singularity? Well, somewhere in the middle. Specifically, A.I. is very good at performing a single, well-defined task (finding your face in photos, identifying cancer in MRIs, whatever). The more and better the information fed to an A.I. system, the better it performs that task. But what happens when the information you feed the system is bad? Or warped?

Well, we just found out what that can look like: feed a neural network the darkest corners of the Internet, and it will see death, destruction and depravity all around.

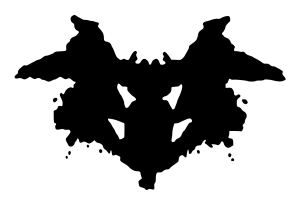

In a run-of-the-mill, human Rorschach test, you show a test subject an abstract inkblot image and ask what they see. These tests are generally used to assess a person’s state of mind, specifically whether they tend to see the world in a positive or negative light.

When you point a standard image-recognition neural network (you know, the kind trained on photos of puppies) at inkblots, you almost always get back positive, happy-vibed responses: “A group of birds sitting on top of a tree branch,” for instance.

But Norman, an A.I. named after Norman Bates of Hitchcock’s Psycho, wasn’t trained on images of puppies and babies. It was trained on imagery far darker and more depraved. According to the BBC: “The software was shown images of people dying in gruesome circumstances, culled from a group on the website Reddit.”

Unlike the ‘standard’ A.I., which saw cheery or innocuous objects, “Norman’s view was unremittingly bleak – it saw dead bodies, blood and destruction in every image,” the BBC continued.

Because Norman was, in effect, raised on the worst parts of the Internet, what he sees is pretty awful.

How sad for Norman.

We’ve previously written at length about the bias that pervades software systems and digital platforms. The basic idea is that the biases humans bring to programming an algorithm will reveal themselves in every conclusion that neural network reaches.

The same dynamic applies here, except now it manifests in the data we feed the system, not just in how we write the algorithm governing it. That adds an entirely new, complex and interlinked variable to designing ethical A.I.

The BBC article continued: “The fact that Norman’s responses were so much darker illustrates a harsh reality in the new world of machine learning, said Prof Iyad Rahwan, part of the three-person team from MIT’s Media Lab which developed Norman.”

“Data matters more than the algorithm,” Rahwan said to the BBC. “It highlights the idea that the data we use to train AI is reflected in the way the AI perceives the world and how it behaves.”
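To make Rahwan’s point concrete, here is a deliberately tiny sketch. It is not how Norman was built; it is a hypothetical 1-nearest-neighbour “captioner” whose code is identical for both models, so only the training data differs. The feature vectors and captions are invented purely for illustration.

```python
import math


def nearest_caption(training_data, features):
    """1-nearest-neighbour: return the caption of the closest training example."""
    best_caption, best_dist = None, math.inf
    for example_features, caption in training_data:
        dist = math.dist(example_features, features)  # Euclidean distance
        if dist < best_dist:
            best_caption, best_dist = caption, dist
    return best_caption


# Two hypothetical toy training sets. The 2-D features (imagine "amount of
# dark ink" and "symmetry") are made up; they are not real image features.
cheerful_data = [
    ((0.2, 0.9), "a group of birds on a branch"),
    ((0.7, 0.8), "a vase of flowers"),
]
bleak_data = [
    ((0.2, 0.9), "a wrecked car"),
    ((0.7, 0.8), "a collapsed building"),
]

inkblot = (0.6, 0.85)  # the same ambiguous input shown to both "models"

print(nearest_caption(cheerful_data, inkblot))
print(nearest_caption(bleak_data, inkblot))
```

Both calls run the same function on the same inkblot; the different answers come entirely from the data each model was given.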

Societally, if we’re to create a technological world governed and empowered by ethical algorithms and A.I., we have to be ever-vigilant not only about how we program the algorithms but also about the data we feed neural networks.

Jeff Francis is a veteran entrepreneur and founder of Dallas-based digital product studio ENO8. Jeff founded ENO8 to empower companies of all sizes to design, develop and deliver innovative, impactful digital products. With more than 18 years working with early-stage startups, Jeff has a passion for creating and growing new businesses from the ground up, and has honed a unique ability to assist companies with aligning their technology product initiatives with real business outcomes.
