Not that long ago, a computer besting a human in a complex game of strategy and skill was nigh unthinkable. The best chess players in the world were far better at the ancient game than their silicon brethren. While chess is a beautiful and fluid game, and the best players employ creativity, unpredictability and gambles, it is ultimately quantifiable. By that nature, it was only a matter of time before computers got better than us at it.

Every piece in chess has an approximate point value assigned to it given its importance to the overall game, as well as the capabilities of that piece (in the classic mode of point assignments, a pawn is worth 1 point, knights and bishops are worth 3, rooks 5, and the all powerful queen nets you 9 points). Now, the true strength and importance of any piece is massively dependent on the style of game, position of that game, stage of the game, etc. But, it gives you a natural way to score a player’s advantage in any given situation.

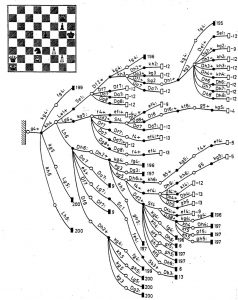

On top of that, there are strategic advantages a player can achieve based on the board and the position of pieces therein relative to one’s opponents. While chess provides a massive amount of possible game configurations from start to finish of unlimited games, on any given turn there are only so many moves you as a player can make. The very best players can assess their relative position and then simulate many, many moves in advance to achieve a positional or piece advantage in the future. It requires otherworldly focus and mental horsepower to run through so many decision trees in one’s head — each piece has a host of moves it can make, your opponent has a host of moves s/he can make in response, and on and on for a great many number of combinations.

One of the great advantages a human player had over computers in the beginning was twofold — one, a human brain is just a much more powerful computational tool than the earliest supercomputers. Two, humans are much better at prioritizing information than a computer is. One of the things that allows the best players to look 12 or more moves in advance is that grandmasters know certain moves or certain decision trees are dumb ideas, or that they’d never happen, so the grandmaster can skip the computation required to run out those decision trees while instead focusing on the decision trees far more likely to a) occur, and b) result in a victory. Because of these, the earliest games against computers went in favor of the top human chess players. But that wouldn’t always hold.

One of the great advantages a human player had over computers in the beginning was twofold — one, a human brain is just a much more powerful computational tool than the earliest supercomputers. Two, humans are much better at prioritizing information than a computer is. One of the things that allows the best players to look 12 or more moves in advance is that grandmasters know certain moves or certain decision trees are dumb ideas, or that they’d never happen, so the grandmaster can skip the computation required to run out those decision trees while instead focusing on the decision trees far more likely to a) occur, and b) result in a victory. Because of these, the earliest games against computers went in favor of the top human chess players. But that wouldn’t always hold.

Because of the computational nature of chess, there would come a time when computing power would rival that of the human brain. Eventually, you could throw enough CPUs and computational power at one specific task, and the computer would be able to out-compute humans. It was only a matter of time. If you’re requiring a computer to make calculations based on data input, the more processors you assign to that task, the faster it can make the computation. You keep shrinking the size of the processor and adding more and more and more to the computer architecture, you’re eventually going to have enough horsepower to rival or surpass a human.

Hardware architects passed that point a while ago. Nowadays, the top computer programs running on not even cutting-edge supercomputers can reliably beat the best of the best humans. There are only so many decision trees, so many historical games to study, so many combinations of moves from a given position, that a powerful enough computer can simply throw enough brute computing force at the “equation” and get an answer. It didn’t require creativity or transformational innovation to win this battle — the computer isn’t thinking in the traditional sense; it’s simply so powerful, it can compute every move in every decision tree possible from the current board and make a mathematical conclusion of what move gives it the greatest chance of achieving victory in the future.

The same cannot be said of Go.

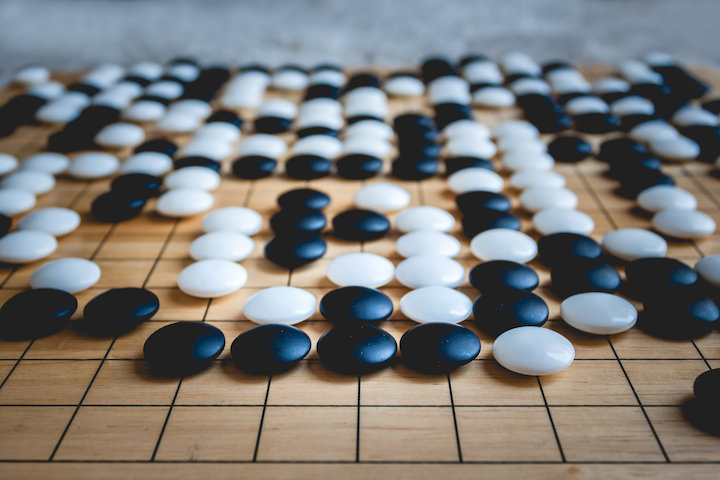

Go is an even more ancient game originating in China, described as “an adversarial game with the objective of surrounding a larger total area of the board with one’s stones than the opponent. As the game progresses, the players position stones on the board to map out formations and potential territories. Contests between opposing formations are often extremely complex and may result in the expansion, reduction, or wholesale capture and loss of formation stones.”

Each player has “stones”, one player playing black, the other white, and they place those stones at the intersection of lines on a 19×19 grid board. The reason it’s different than chess is the size and scope of the board and the sheer number of possible formations.

There are more possible positions in Go than atoms in the visible universe.

As such, beating a human in Go can’t be simply a computational task (at least not yet). There are far too many combinations of moves, formations and strategy to simply brute force the game against the very best human players. So, to win a game this difficult, Google went a different direction — it taught a computer to think for itself.

Google’s DeepMind outfit built AlphaGo, a neural network with the sole task of learning how to beat the very best human players in the world at Go. But, the sheer number of decision trees was so large, the computer system can’t just run every possible permutation of the game through a computational analysis to select moves — it has to be able to store massive amounts of previous games played, recognize patterns therein, remember which positions and moves most likely led to victory, and play accordingly. The computer had to study millions upon millions of top-level human games, catalogue and store them all, and then identify trends from those games at every move stage along the way. You can’t just compute your way through that with sheer force of CPUs — you have to teach the computer how to think and develop algorithms to help it better prioritize the most promising decision trees. So that’s what Google did.

The first incarnation of AlphaGo took on Lee Sedol — the best Go player of the last decade — last year, and won 4 games out of 5. While a smashing success for DeepMind, Sedol exposed a notable flaw in the system in his lone victory. So, Demis Hassabis, the CEO and founder of DeepMind, decided to rebuild AlphaGo to address that flaw.

In essence, they built the new neural network without a vast trove of human moves to parse. Instead, they trained it using only learnings from games in which the machine plays itself — another step in the progression toward AI that truly learns on its own. “AlphaGo has become its own teacher,” says David Silver, the project’s lead researcher to Wired.

It’s kinda like that scene from War Games in which a young Matthew Broderick has to trick a computer into playing tic tac toe against itself so the machine can learn there’s no winner in Global Thermonuclear war.

While War Games might have strayed into the realm of science fiction given the autonomy a computer system had over America’s entire nuclear arsenal, the scene is remarkably prescient when it comes to how neural networks are teaching computers to think.

Hassabis, to Wired, said of the new and improved AlphaGo:

“the new algorithms are significantly more efficient than those that underpinned the original incarnation of AlphaGo. The DeepMind team can train AlphaGo in weeks rather than months, and during a match like the one in Wuzhen, the system can run on just one of the new TPU chip boards that Google built specifically to run this kind of machine-learning software. In other words, it needs only about a tenth of the processing power used by the original incarnation of AlphaGo.”

So, DeepMind not only taught a computer to think (and dominate Go), but they’ve also figured out how to refine their algorithms and teaching technique such that the new AlphaGo can even more thoroughly dominate human competitors, while using 1/10 the computing power. And, as Alphabet and others harness these learnings and apply them to other complex problems requiring creativity, learning, and pattern recognition, it really is a brave new world of computing.

Jeff Francis is a veteran entrepreneur and founder of Dallas-based digital product studio ENO8. Jeff founded ENO8 to empower companies of all sizes to design, develop and deliver innovative, impactful digital products. With more than 18 years working with early-stage startups, Jeff has a passion for creating and growing new businesses from the ground up, and has honed a unique ability to assist companies with aligning their technology product initiatives with real business outcomes.

Sign up for power-packed emails to get critical insights into why software fails and how you can succeed!

Whether you have your ducks in a row or just an idea, we’ll help you create software your customers will Love.

LET'S TALK